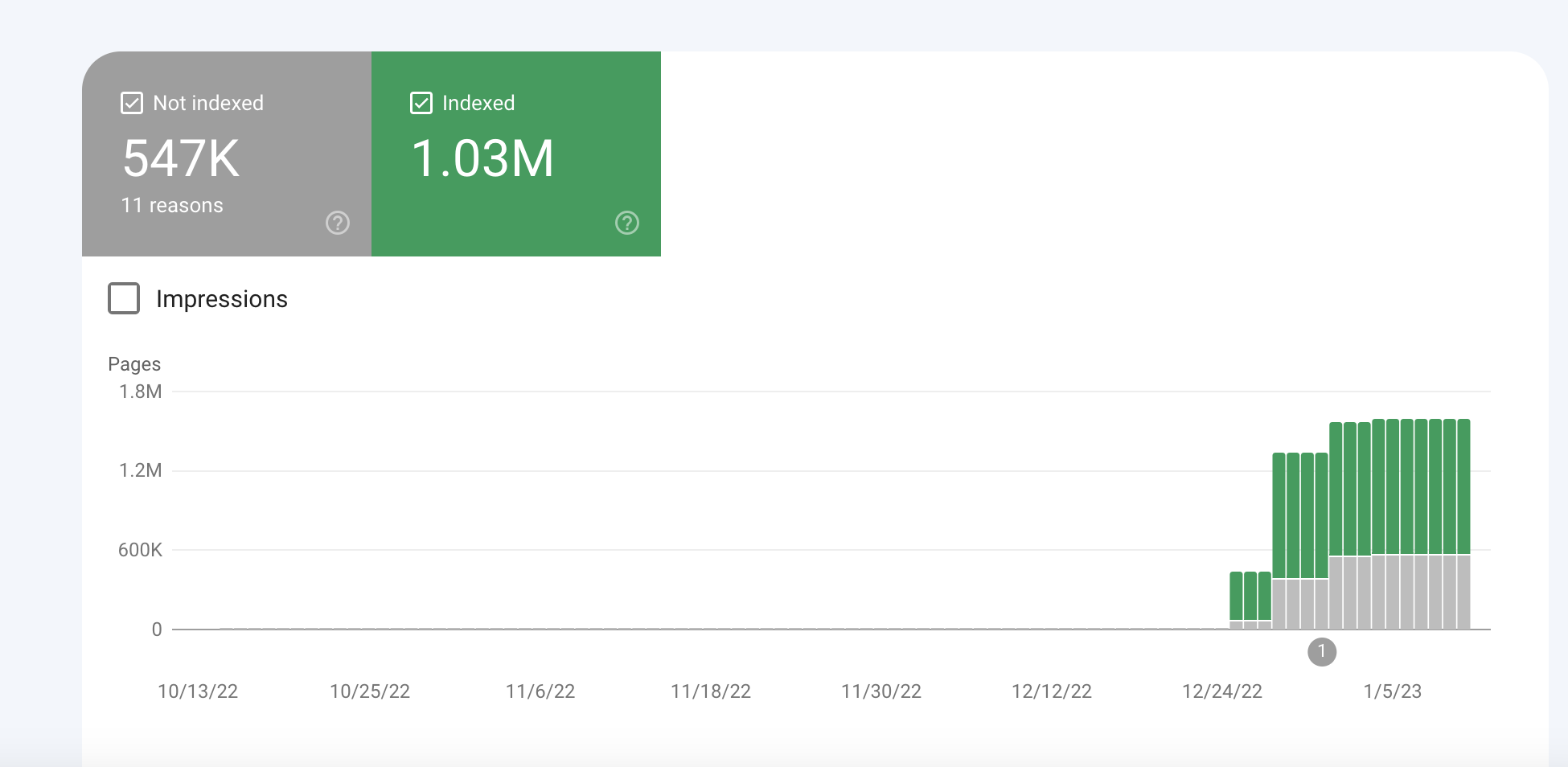

Since late November / early December, many Shopify brands have seen the number of indexed pages increase substantially. Looking at only a small subset of our client accounts, we can see that in one instance indexed pages grew from 36k to over 17 million, all in the space of a month.

Why is this a problem?

Crawl budget is something you have probably heard SEOs mention time and time again and, unless your site is extremely large, more often than not it won’t be an issue for you. However, the issue currently affecting Shopify stores is bloating the index substantially, which will be hurting the performance of your core pages (think commercial categories ££!), and you could be losing money.

How to diagnose?

- Head to your Google Search Console property and open the ‘Pages’ report, which can be found in the menu on the left-hand side.

- You will then see a view showing ‘Not Indexed’ and ‘Indexed’ pages. Ensure the ‘Indexed’ tab is selected and ‘Not Indexed’ is not.

- Under the graph, you’ll see a link called ‘View data about indexed pages’. Click it.

- Once in here, if you can see a sudden influx of indexed pages beginning at the end of November and growing rapidly through December, you’ve likely been hit by the spam issue affecting many Shopify sites.

- The spam URLs will follow this pattern: /collections/vendors?q=many-different-characters (you should be able to spot the spam URLs clearly)

- The generated URLs will look like collection pages, but with spam H1s and URLs, example below:
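If you export the list of indexed URLs from Search Console, a short script can count how many match the spam pattern. The following is a minimal sketch in Python, not a definitive tool: the function names `is_vendor_spam` and `summarise` are our own, and it assumes you have already loaded the exported URLs into a list of strings. Note that it flags any value of `q`, so legitimate vendor pages (if you have any) would be counted too.

```python
from urllib.parse import urlsplit, parse_qs

# Path shared by the spam URLs described above (hypothetical constant name).
SPAM_PATH_SUFFIX = "/collections/vendors"


def is_vendor_spam(url: str) -> bool:
    """Return True if the URL looks like /collections/vendors?q=<anything>."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/")
    # keep_blank_values=True so that an empty "?q=" is still detected.
    has_q = "q" in parse_qs(parts.query, keep_blank_values=True)
    return path.endswith(SPAM_PATH_SUFFIX) and has_q


def summarise(urls):
    """Return (number of URLs matching the pattern, total number of URLs)."""
    matching = [u for u in urls if is_vendor_spam(u)]
    return len(matching), len(urls)
```

If the first number returned by `summarise` accounts for most of the jump in indexed pages, that is a strong sign the store has been affected.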

How to fix?

- Ensure that you are not using /vendors?q= URLs for anything legitimate on your site (for example, links that filter products by vendor)

- Once this is verified, contact your developer and apply a meta robots noindex tag to all pages whose URL contains /vendors?q=. This will start the process of Google dropping the newly indexed pages out of the index

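- You will then want to monitor this over time. Once the number of indexed pages has returned to normal levels, you can apply a robots.txt disallow. Don’t add it any earlier: Google can only see the noindex tag on pages it is still allowed to crawl. The disallow rule would be: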

Disallow: */vendors?q=
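To sanity-check which URLs a wildcard rule like this would block, here is a rough Python sketch. It approximates Google-style pattern matching (`*` matches any run of characters, a trailing `$` anchors the end, and rules match from the start of the path plus query string). The helper names `rule_to_regex` and `is_blocked` are our own, and the code is an illustration of the matching logic rather than a full robots.txt parser. We don't use Python's built-in `urllib.robotparser` here because it compares rule paths as plain prefixes and does not expand `*`.

```python
import re


def rule_to_regex(rule: str) -> re.Pattern:
    """Translate a robots.txt path rule with * and $ into a compiled regex."""
    anchored = rule.endswith("$")
    body = rule[:-1] if anchored else rule
    # Escape the literal parts and join them with ".*" where the rule had "*".
    pattern = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile(pattern + ("$" if anchored else ""))


def is_blocked(rule: str, path_and_query: str) -> bool:
    """Return True if the rule matches from the start of the path + query."""
    return rule_to_regex(rule).match(path_and_query) is not None


RULE = "*/vendors?q="

for candidate in (
    "/collections/vendors?q=some-spam-text",  # spam pattern
    "/collections/vendors",                   # no query string
    "/collections/shoes",                     # normal collection
):
    print(candidate, "->", is_blocked(RULE, candidate))
```

One thing this makes visible: the rule only matches when `q` is the first query parameter, so a URL such as /collections/vendors?page=2&q=text would not be blocked by it.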

A guide on how to edit robots.txt in Shopify can be found here.
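Before moving on to the robots.txt step, it is worth confirming that the noindex tag is actually being served on the spam URLs. Below is a minimal sketch using only Python's standard library. The class and function names (`RobotsMetaParser`, `has_noindex`) are our own, and it assumes you have already fetched the page's HTML as a string (for example by viewing the page source). It only checks meta tags and does not look at the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> / <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so default to "".
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr_map.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives
```

Running `has_noindex` against the source of a few /vendors?q= pages, and against a normal collection page, is a quick way to check that the tag is applied where intended and nowhere else.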